Catching AI in Student Work: The Uncomfortable Truth

Table of Contents

- The Uncomfortable Truth About Catching AI in Student Work

- Why AI Writing Detection in Education Is So Tricky

- The Teacher AI Detection Checklist: Teaching-Specific Indicators

- Technical Indicators: Useful but Less Reliable

- What NOT to Do: Common Mistakes in AI Detection for Teachers

- Better Approaches: Design Assignments That Make AI Harder to Use

- A Step-by-Step Review Process for Suspicious Papers

- Using Structured Document Review to Support Your Process

- Talking to Students: Conversations, Not Accusations

- Final Thoughts

The Uncomfortable Truth About Catching AI in Student Work

Here’s the reality: no AI detection method is 100% reliable! Not the software, not your gut feeling, not any checklist including this one.

But teachers still need a way to think through this problem. Since ChatGPT launched in late 2022, educators have been scrambling to figure out how to check student work for AI use. Some schools rushed to buy detection software. Others banned AI outright. Most teachers just felt stuck.

This is a practical checklist for real classrooms, where false accusations can harm a student’s academic career and trust matters more than gotcha moments. I’ll walk through teaching-specific indicators, technical red flags, what not to do, and how to design assignments that make the question easier to answer. The aim isn’t conviction. It’s to give you a structured way to review student essays and start honest conversations when something feels off.

Why AI Writing Detection in Education Is So Tricky

Understanding why this is difficult helps.

AI detection tools work by analyzing text for statistical patterns that look machine-generated. The trouble is, those patterns overlap with perfectly normal human writing. A 2023 study from Stanford found that AI detectors flagged 61.3% of essays by non-native English speakers as AI-generated, compared to just 5.1% of essays by native speakers. That’s not a minor glitch. It’s a bias baked into the technology.

Turnitin reported a 4% false-positive rate when it launched its AI detection feature. That sounds small until you realize it means roughly one in every twenty-five students who wrote their own work could be wrongly flagged. In a class of thirty, that’s potentially more than one student flagged for something they didn’t do.
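To make that arithmetic concrete, here is a minimal sketch in Python. It assumes every student in the class wrote their own essay and that each essay is flagged independently of the others, which is a simplification. The function names are my own illustration, not part of any detection tool.

```python
def expected_false_flags(honest_students: int, false_positive_rate: float) -> float:
    """Average number of honest students a detector would wrongly flag."""
    return honest_students * false_positive_rate


def chance_of_any_false_flag(honest_students: int, false_positive_rate: float) -> float:
    """Probability that at least one honest student is wrongly flagged.

    Assumes each essay is flagged independently, which is a simplification.
    """
    return 1 - (1 - false_positive_rate) ** honest_students


class_size = 30  # assumption: every student wrote their own essay
fp_rate = 0.04   # the 4% false-positive figure cited above

print(f"Expected wrongly flagged students: {expected_false_flags(class_size, fp_rate):.1f}")
print(f"Chance at least one is wrongly flagged: {chance_of_any_false_flag(class_size, fp_rate):.0%}")
```

Under those assumptions, the expected number of wrongly flagged students is 1.2, and the chance that at least one honest student in the class gets flagged is roughly 71%. Across several assignments in a semester, a false flag somewhere becomes very likely.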

Meanwhile, students who actually use AI can easily dodge detection by paraphrasing, mixing in their own sentences, or running the output through a second tool. The arms race between AI generators and AI detectors moves fast, and the detectors are usually a step behind.

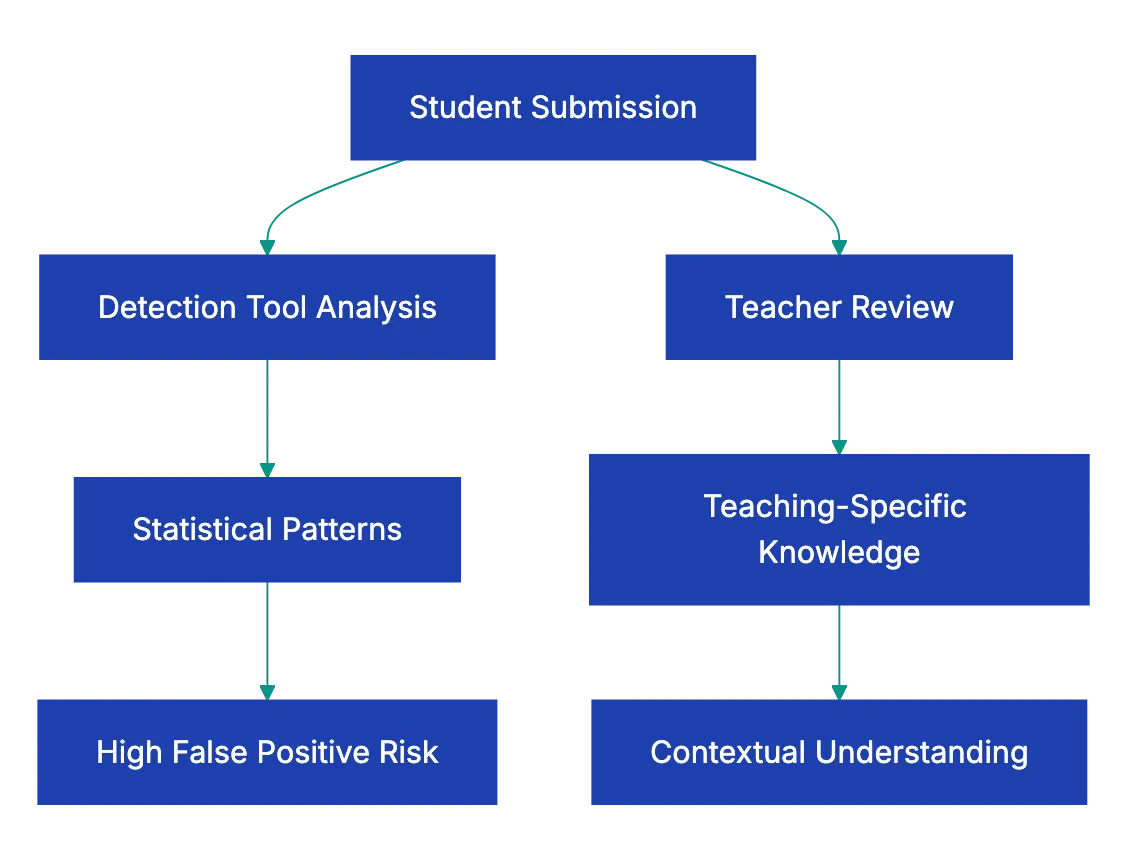

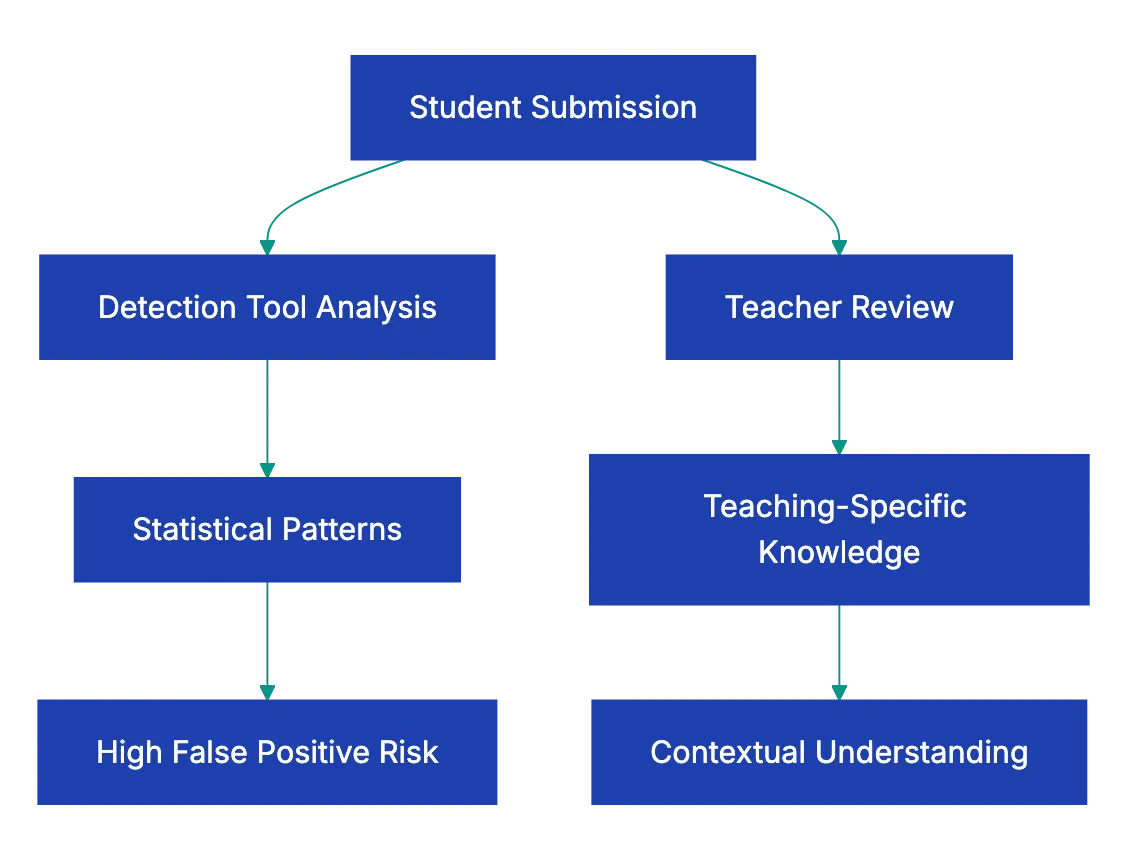

So here’s the honest framing: detection tools are one signal among many. They’re not proof. They’re not even close to proof. What works better is a combination of human judgment, process evidence, and structured conversation. That’s what this checklist is built around.

The Teacher AI Detection Checklist: Teaching-Specific Indicators

These are the signals that only a teacher can spot. No software can do this part. You know your students, their voices, their habits, and their classroom participation. That knowledge is your strongest tool.

AI Detection Challenge Overview:

| Indicator | What to Look For | Why It Matters |
|---|---|---|
| Voice Inconsistency | Compare against in-class writing and previous assignments. | A sudden leap in sophistication is worth a conversation. |
| Knowledge Mismatch | Does the paper’s depth match classroom performance? | A C-student producing graduate-level analysis is a red flag. |
| Topic Coverage | Does the essay engage specific assigned readings? | AI tends to discuss topics generically, not reference your syllabus. |
| Process Evidence | Can the student show notes, outlines, drafts, or search history? | If they can’t explain their own paper, that’s a stronger signal than any tool. Be aware, though, that some services can create a fake revision history for a Word document. |
| Class Discussion Gaps | Does the paper reference ideas never discussed in class? | AI pulls from broad training data, not your specific lectures. |
| Personal Connection | Are there personal anecdotes, opinions, or genuine reactions? | AI struggles to fabricate authentic personal experiences. |

Voice inconsistency checks are probably the most powerful thing on this list. If you’ve been collecting in-class writing samples from week one, you already have a baseline for each student. When a submitted essay reads like it was written by a completely different person, that tells you something. It doesn’t necessarily mean they used AI. Maybe they got heavy editing help from a tutor, or maybe they plagiarized from another student. But it tells you the work needs a closer look.

The knowledge mismatch check works similarly. If a student who struggles with basic concepts in class discussions suddenly produces a paper that handles nuance and complexity with ease, that gap deserves exploration.

Technical Indicators: Useful but Less Reliable

These are the textual patterns that detection software tries to catch algorithmically. You can spot some of them yourself. But I want to be upfront: these indicators produce false positives regularly. A well-edited human essay can trigger every one of them. Use these as supporting evidence, never as standalone proof.

Common technical signs when you’re trying to detect AI in student essays:

- Overly polished prose with zero grammatical errors, no informal language, and unnaturally smooth transitions between every paragraph

- Non-existent or fabricated citations where the paper references sources that don’t exist, a well-known AI hallucination problem

- Balanced paragraph structure where every paragraph is roughly the same length, follows the same pattern, and covers a suspiciously even number of points

- Excessive hedging with phrases like “it is noted that,” “one might argue,” or “there are various perspectives” appearing constantly

- Absence of personal voice where the writing feels competent but empty, like a Wikipedia article wearing a student’s name

- Generic topic treatment that covers a subject broadly without engaging the specific angle or readings you assigned

Here’s an example that stuck with me. A high school English teacher in Texas shared that she received three essays on The Great Gatsby that all used the phrase “the details of the American Dream” in their opening paragraphs. The essays were otherwise different, but that phrase, which is a known AI writing pattern, appeared in all three. She didn’t accuse anyone. She asked each student to walk her through their argument in a one-on-one conversation. Two couldn’t explain their own thesis. The third could, in detail. Same red flag, different realities.

These indicators start a conversation.

What NOT to Do: Common Mistakes in AI Detection for Teachers

This section might be the most important one in the whole article. Mistakes in detection can harm students, so avoid the following.

Don’t rely solely on detection tool scores. When a tool says “87% probability AI-generated,” that number feels definitive. It isn’t. These scores reflect statistical likelihood based on text patterns, not actual knowledge of how the text was created. A student with excellent grammar and a formal writing style will consistently score higher on AI probability, and that’s unfair.

Don’t publicly accuse a student. This seems obvious, but it happens. Teachers have confronted students in front of classmates, posted detection scores on learning management systems, or sent emails that feel like criminal charges. Every major academic integrity organization recommends private, one-on-one conversations.

Don’t treat software output as proof. This is worth repeating because it’s where the biggest damage occurs. In 2023, an instructor at Texas A&M University-Commerce falsely accused an entire class of using AI based on ChatGPT’s own claim that it had generated their work. ChatGPT was wrong. It has no memory of what it has actually produced. The professor later apologized, but the damage to student trust was real.

Don’t ignore cultural and linguistic factors. Non-native English speakers often produce writing that triggers AI detectors because they use simpler sentence structures, common phrases, and patterns they’ve learned from textbooks. Flagging these students disproportionately isn’t just inaccurate. It’s harmful.

Don’t skip the conversation. Tools and checklists are inputs. The actual assessment happens when you sit down with a student and ask them to explain their work. If they can walk you through their argument, reference their sources, and discuss their writing process, that matters far more than any algorithmic score.

Better Approaches: Design Assignments That Make AI Harder to Use

The smartest strategy isn’t better detection. It’s better assignment design. When you build AI-resistant assignments, you spend less time playing detective and more time teaching.

Here are approaches that work:

- Require personal reflection tied to specific class experiences. Ask students to connect their argument to something discussed in class on a specific date, or to respond to a classmate’s comment. AI doesn’t have access to your classroom.

- Require process documentation. Ask for outlines, rough drafts, annotated bibliographies, or research logs submitted at intervals before the final paper. This creates a paper trail that’s hard to fake convincingly.

- Use in-class writing baselines. Collect a handwritten or timed in-class writing sample early in the semester. You now have a genuine comparison point for every student.

- Assign hyper-specific prompts. Instead of “discuss the themes of 1984,” try “analyze how the telescreen scene in Chapter 5 connects to the specific argument Smith makes in his journal entry, and explain why Orwell chose that sequence.” The more specific your prompt, the less useful a generic AI response becomes.

- Build in oral components. A five-minute conversation where a student explains their paper is one of the most effective detection methods available. Students who wrote their own work can discuss it naturally. Students who didn’t tend to struggle with basic follow-up questions.

A middle school teacher in Oregon shared that after switching to process-based assignments, her concerns about AI use dropped by roughly 80%. The structure made AI output inadequate on its own. Students who tried to submit AI-generated work couldn’t produce the required drafts and reflections to go with it.

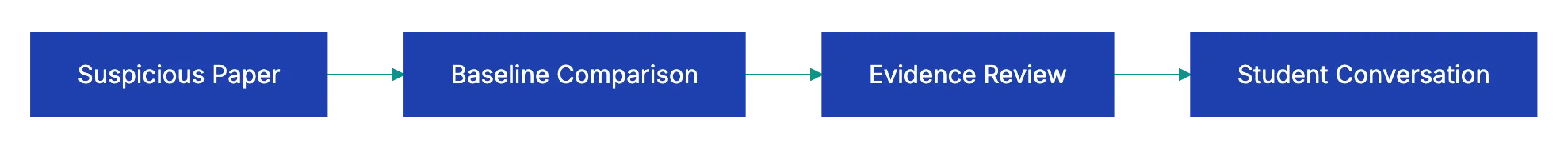

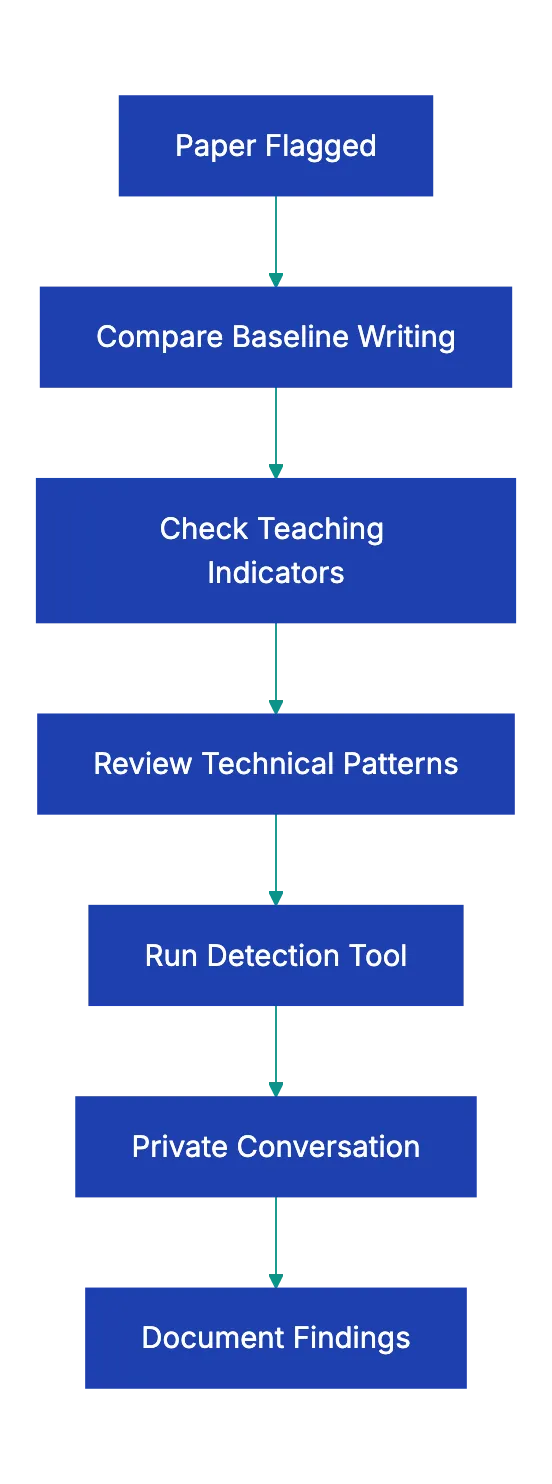

A Step-by-Step Review Process for Suspicious Papers

If an essay feels off, follow the same steps every time. A consistent process is what keeps the review fair.

- Compare against baseline writing. Pull up the student’s in-class writing or earlier assignments. Read them side by side with the suspicious paper. Note specific differences in vocabulary, sentence complexity, and voice.

- Check for teaching-specific indicators. Walk through the checklist table above. Does the paper engage your assigned readings? Does it reference class discussions? Does the depth match what you’ve seen from this student?

- Look for technical red flags. Scan for the patterns listed earlier: fabricated citations, uniform paragraph length, excessive hedging, generic treatment of the topic.

- Run the paper through a detection tool as one data point. If your school provides access to a tool like Turnitin’s AI detection, Originality.ai, or GPTZero, use it. Record the score, but don’t treat it as a verdict.

- Have a private conversation. This is the most important step. Ask the student to:

  a. Explain their thesis in their own words

  b. Walk you through their research process

  c. Discuss a specific paragraph and why they made the choices they did

  d. Show any notes, outlines, or drafts they have

- Document everything. Whatever you find, keep records. If this leads to an academic integrity process, you’ll need thorough documentation of what you observed and the steps you took.

The conversation step is where most cases resolve themselves. Students who did the work can talk about it. Students who didn’t often can’t, and that gap is more telling than any percentage score from a detection tool.
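If you keep your records digitally, the steps above can be sketched as a simple structured record. This is only an illustration, assuming one record per paper. The class name, step names, and the rule that a decision waits for a documented conversation are my own hypothetical choices, not a feature of any real tool.

```python
from dataclasses import dataclass, field
from typing import Optional

# Step names mirror the review process above, in order (hypothetical labels).
STEPS = [
    "baseline_comparison",
    "teaching_indicators",
    "technical_red_flags",
    "detection_tool",
    "private_conversation",
]


@dataclass
class ReviewRecord:
    """Hypothetical record of one paper review; not tied to any real tool."""
    student: str
    assignment: str
    notes: dict = field(default_factory=dict)  # step name -> what you observed

    def log(self, step: str, observation: str) -> None:
        """Document what you observed for one step of the review."""
        if step not in STEPS:
            raise ValueError(f"Unknown step: {step}")
        self.notes[step] = observation

    def next_step(self) -> Optional[str]:
        """Return the first step with no notes yet, or None if all are done."""
        for step in STEPS:
            if step not in self.notes:
                return step
        return None

    def ready_to_decide(self) -> bool:
        """A decision waits until the private conversation is documented."""
        return "private_conversation" in self.notes


record = ReviewRecord(student="Student A", assignment="Essay 2")
record.log("baseline_comparison", "Vocabulary noticeably more formal than in-class sample.")
record.log("detection_tool", "Score recorded as one data point, not a verdict.")
print(record.next_step())        # teaching_indicators
print(record.ready_to_decide())  # False
```

The point of the sketch is the ordering: a tool score can be logged at any time, but nothing counts as ready for a decision until the conversation has happened and been written down.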

Using Structured Document Review to Support Your Process

Let’s connect this to a practical application beyond the classroom environment.

Many educators are already reviewing dozens or hundreds of papers per assignment. Adding a manual AI detection layer to that workload sounds daunting. It is. That’s why structured document review matters.

The idea is simple: instead of reading every paper with equal suspicion, you use a consistent teacher AI detection checklist to flag papers that need closer attention. You focus your time where it counts.

Revdoku’s AI writing review checklist approach is a valuable input in this process. You can upload papers for a structured analysis of AI-content indicators, which gives you a starting framework alongside your own teaching-specific knowledge. It won’t tell you definitively whether a student used AI. Nothing can. But it adds structure to what would otherwise be a gut-feeling exercise.

The combination looks like this:

| Review Layer | What It Provides | Limitation |
|---|---|---|
| Your teaching experience | Voice comparison, knowledge match, class engagement | Subjective, time-intensive |
| Structured checklist analysis | Consistent technical indicator review | Can’t account for individual student context |
| Detection software | Statistical probability score | High false-positive rates, bias against non-native speakers |
| Student conversation | Direct evidence of understanding | Requires scheduling time, student may be nervous |

No single layer suffices. Together, they clarify. The goal isn’t certainty. It’s informed judgment.

Talking to Students: Conversations, Not Accusations

Approaching students matters as much as accuracy. Maybe more.

Start from curiosity, not accusation. Instead of “I think you used ChatGPT,” try “I noticed some differences between this paper and your earlier work, and I’d love to hear about your writing process for this one.” The first approach puts the student on the defensive. The second opens a door.

Some practical conversation starters that work:

- “Walk me through how you came up with your thesis.”

- “Which source was most helpful, and why?”

- “Tell me about the hardest part of writing this paper.”

- “I notice you used [specific term or concept]. Can you explain what that means to you?”

A university writing center director in Massachusetts described her approach as “assume good faith until the conversation tells you otherwise.” She found that about 30% of papers flagged by detection software turned out to be genuinely student-written. Those students deserved the benefit of the doubt, and they got it because the process started with conversation rather than accusation.

If the conversation reveals that the student can’t explain their own work, you’re on much firmer ground to pursue an academic integrity process. You have the baseline comparison, the technical indicators, and now the direct evidence from conversation. That’s a case built on multiple data points, not a single algorithmic score.

Final Thoughts

AI detection for teachers is not a solved problem. It might never be. The tools are imperfect, the stakes are high, and the technology on both sides keeps changing.

But you don’t need perfection. You need a process. This teacher AI detection checklist gives you one: compare against baselines, check for teaching-specific indicators, note technical red flags, use software as one data point, and always, always have the conversation before reaching a conclusion.

The students who actually did the work deserve a system that doesn’t falsely accuse them. The students who didn’t deserve a system that catches them fairly. And every student deserves a teacher who approaches this with more curiosity than suspicion.

If you want to add structure to your review process, Revdoku’s AI Writing Detection checklist can be used as one layer in your approach. Upload papers for a structured analysis of AI-content indicators, and use the results alongside your own professional judgment. Because in the end, the best AI detector in your classroom isn’t software. It’s you.

Frequently Asked Questions

How can I effectively compare a student's recent work with their previous submissions?

To compare a student's recent work with their previous submissions, gather examples of their in-class writing and past assignments. Read them side by side to analyze differences in vocabulary, sentence structure, and overall voice. This comparison can reveal inconsistencies that warrant further discussion.

What are the best practices for talking to students when I suspect they used AI?

When discussing potential AI use with students, approach the conversation with curiosity rather than accusation. Ask open-ended questions about their writing process, sources, and specific arguments in their paper. This encourages honest dialogue and helps you gauge their understanding of the work.

What steps can I take to prevent students from using AI-generated content in the first place?

To prevent AI-generated content use, design assignments that require personal reflection and direct engagement with class discussions. Ask for process documentation at multiple stages, and provide hyper-specific prompts that limit the applicability of generic AI responses. This structure makes it more challenging for students to submit AI-generated work.

Are there specific indicators I should look for when reviewing a suspicious essay?

Yes, look for teaching-specific indicators like voice inconsistencies, depth of knowledge mismatches, and class discussion gaps. Also, technical indicators such as overly polished writing, excessive hedging, and generic topic treatment can provide supporting evidence. Use these as part of your overall assessment, not as definitive proof.

What should I do if detection software flags a significantly high percentage of a student's work as AI-generated?

If detection software flags a high percentage of a student's work, treat this finding as just one piece of the puzzle. Gather additional context by reviewing their writing history and preparing for a private conversation to understand their process. Focus on evidence from discussions, as it can reveal a student’s genuine understanding of their work.

How can I ensure fairness in my approach to potential AI use in student work?

To ensure fairness, use a structured approach that covers baseline comparisons, teaching-specific indicators, and technical signs. Prioritize private conversations and treat algorithmic scores as one of many data points. Document your findings thoroughly and make decisions based on a complete review of each student's situation.

What resources are available for teachers to help with AI detection in student writing?

Several resources can assist teachers with AI detection, including structured checklists like Revdoku's, which analyzes AI content indicators. Also, there are detection tools such as Turnitin and GPTZero that can provide statistical data. Combining these resources with your own teaching experience will yield the best results in identifying potential AI use.

Article History

- April 5, 2026 — Published

- April 8, 2026 — Reviewed by Eugene Mi

- April 8, 2026 — Last updated